Stack frame: Difference between revisions

imported>Pat Palmer m (→How a stack frame works: repairing ref) |

Pat Palmer (talk | contribs) mNo edit summary |

||

| (13 intermediate revisions by 4 users not shown) | |||

| Line 1: | Line 1: | ||

In [[computer science]], a '''stack frame''' is a [[memory management]] strategy used to create and destroy temporary (automatic) [[variable]]s in | {{subpages}} | ||

In [[computer science]], a '''stack frame''' is a [[memory management]] strategy used to create and destroy temporary (automatic) [[variable]]s in ''procedural'' programming languages. Among other things, use of a [[stack]] allows programming languages to allow [[recursive]] calling of subroutines. Stack frames only exist at [[run-time]]. | |||

When a program runs, it is called a [[process]], and it starts with one [[thread]] (which may create additional threads). Stacks are per thread, and each thread's [[stack]] adds a new stack ''frame'' whenever a procedure (or function or subroutine or method) is invoked. Each stack frame contains space for [[actual parameter|actual parameters]], [[local variables]], temporary locations, and (in some architectures) information about the [[calling context]] such as the memory address of the calling subroutine<ref name="GnuCstack">{{cite web|url=http://gcc.gnu.org/onlinedocs/gcc-3.2.3/gccint/Stack-and-Calling.html#Stack%20and%20Calling|title=Stack Layout and Calling Conventions (Gnu GCC version 3.2.3)|publisher=Free Software Foundation, Inc.|year=2002|accessdate=2007-04-27}}</ref>. When the called procedure finishes executing, its stack frame is removed from the stack, and thread execution resumes back in the calling procedure. | |||

The exact format and contents when "packing the stack" (i.e., copying things onto it) during subroutine calls can vary by the programming language in use. | |||

=Overview= | =Overview= | ||

Many architectures provide special [[hardware]] and [[operating system]] features for stack frames. Although many different programming languages make use of stack frames, not all [[compilers]] ''pack the stack'' (i.e., organize the contents of each stack frame) in the same way, and this complicates calling between programs compiled with different compilers. For example, the success of the Pascal programming language was handicapped because it used the stack differently than C and C++, making it difficult for Pascal programs to call programs written in C or C++. | Many architectures provide special [[hardware]] and [[operating system]] features for stack frames. Although many different programming languages make use of stack frames, not all [[compiler|compilers]] ''pack the stack'' (i.e., organize the contents of each stack frame) in the same way, and this complicates calling between programs compiled with different compilers. For example, the success of the Pascal programming language was handicapped because it used the stack differently than C and C++, making it difficult for Pascal programs to call programs written in C or C++. | ||

The order of packing information in the stack frame may or may not be part of the programming language specification. For example, on Solaris (Unix) systems, the GNU [[C_programming_language|C]] [[compiler]] and the [[Sun Microsystems|Sun]] C compiler pack the stack in slightly different ways; prior to 2007, it was necessary to compile two programs with the same compiler if they were to [[linking loader|link]] (call across programs at runtime) with each other at runtime<ref name="GccSun">{{cite web|url=http://forum.java.sun.com/thread.jspa?threadID=5105358&tstart=75|title="gcc & glibc & ABI compatibility?" in Developer Forum, Sun Developer Network|publisher=Sun Microsystems|year=2006| | The order of packing information in the stack frame may or may not be part of the programming language specification. For example, on Solaris (Unix) systems, the GNU [[C_programming_language|C]] [[compiler]] and the [[Sun Microsystems|Sun]] C compiler pack the stack in slightly different ways; prior to 2007, it was necessary to compile two programs with the same compiler if they were to [[linking loader|link]] (call across programs at runtime) with each other at runtime<ref name="GccSun">{{cite web|url=http://forum.java.sun.com/thread.jspa?threadID=5105358&tstart=75|title="gcc & glibc & ABI compatibility?" in Developer Forum, Sun Developer Network|publisher=Sun Microsystems|year=2006| | ||

accessdate=2007-04-27}}</ref>. Other languages, such as [[Java programming language|Java]] (which runs in a special [[runtime environment]] independent of the underlying operating system), do specify exactly how the stack contents are organized, and so compiler incompatibities of the sort that C has do not arise in [[Java programming language|Java]]. | accessdate=2007-04-27}}</ref>. Other languages, such as [[Java programming language|Java]] (which runs in a special [[runtime environment]] independent of the underlying operating system), do specify exactly how the stack contents are organized, and so compiler incompatibities of the sort that C has do not arise in [[Java programming language|Java]]. | ||

In a computer [[operating system]], a special program, sometimes called a [[linking loader]], launches programs by creating a [[process]] (a set of special [[data structures]] by which an operating system tracks the running of a program) which contains a very large [[virtual memory]] address space. Each process may contain one or more [[thread]]s, and each thread will have its own stack and [[program counter]]. Each stack is typically assigned a fairly large region in the virtual address space of the enclosing process. If a stack grows to fill its currently allocated address space, the operating system may be able to allocate additional chunks of contiguous virtual memory space--if contiguous address space is still unused<ref name="AppleStack">{{cite web|url=http://developer.apple.com/qa/qa2005/qa1419.html|title=Technical Q&A QA1419 (on allocated stack space in Mac OS X) in Apple Developer Network|publisher=Apple Computer|year=2005| | In a computer [[operating system]], a special program, sometimes called a [[linking loader]], launches programs by creating a [[process]] (a set of special [[data structure|data structures]] by which an operating system tracks the running of a program) which contains a very large [[virtual memory]] address space. Each process may contain one or more [[thread]]s, and each thread will have its own stack and [[program counter]]. Each stack is typically assigned a fairly large region in the virtual address space of the enclosing process. If a stack grows to fill its currently allocated address space, the operating system may be able to allocate additional chunks of contiguous virtual memory space--if contiguous address space is still unused<ref name="AppleStack">{{cite web|url=http://developer.apple.com/qa/qa2005/qa1419.html|title=Technical Q&A QA1419 (on allocated stack space in Mac OS X) in Apple Developer Network|publisher=Apple Computer|year=2005| | ||

accessdate=2007-04-27}}</ref>. But if too many subroutine calls occur (due perhaps to an infinite loop or to recursive calling that continues too long), the stack may not be able to continue to grow and grab more address space. At this point, the operating system will terminate the process with an error. | accessdate=2007-04-27}}</ref>. But if too many subroutine calls occur (due perhaps to an infinite loop or to recursive calling that continues too long), the stack may not be able to continue to grow and grab more address space. At this point, the operating system will terminate the process with an error. | ||

=How a stack frame works= | =How a stack frame works= | ||

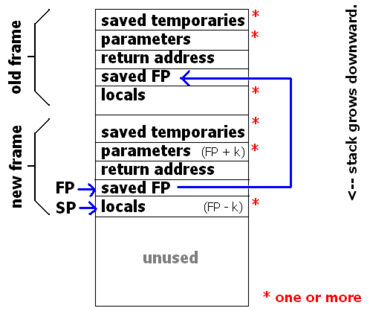

[[Image:Stackframe.png|thumb|370px|right|Contents of a stack frame from a SPARC system (Solaris). Shown are two frames (a function that has called another function). Blue arrows are pointers. Parameters and locals can be addressed as FP ± k. NOTE: Intel/Windows stacks grow upward<ref name="BryantOhallaron">{{cite book|url=http://www.amazon.com/Computer-Systems-Programmers-Randal-Bryant/dp/013034074X|title="Computer Systems: A Programmer's Perspective" by Randal Bryant and David O'Hallaron © 2003 Prentice Hall. See p. 171.|publisher=Prentice Hall|accessdate=2007-05-03}}</ref>.]] | [[Image:Stackframe.png|thumb|370px|right|Contents of a stack frame from a [[SPARC]] system ([[Sun Solaris]]). Shown are two frames (a function that has called another function). Blue arrows are pointers. Parameters and locals can be addressed as FP ± k. NOTE: Intel/Windows stacks grow upward<ref name="BryantOhallaron">{{cite book|url=http://www.amazon.com/Computer-Systems-Programmers-Randal-Bryant/dp/013034074X|title="Computer Systems: A Programmer's Perspective" by Randal Bryant and David O'Hallaron © 2003 Prentice Hall. See p. 171.|publisher=Prentice Hall|accessdate=2007-05-03}}</ref>.]] | ||

To use a stack frame, a thread keeps two [[pointer|pointers]], often called the ''Stack Pointer'' (SP), and the ''Frame'' (FP) | To use a stack frame, a thread keeps two [[pointer|pointers]], often called the ''Stack Pointer'' (SP), and the ''Frame Pointer'' (FP) (a.k.a. ''Base Pointer''). The SP always points to the "top" of the stack, and the FP always points to the "top" of the frame for this call. Additionally, the thread also maintains a [[program counter|program counter (PC)]] which points to the next instruction to be executed. Then, whenever a function call takes place, the following steps take place in roughly this order: | ||

# The caller saves local variables and temporaries, by pushing them onto the stack. | # The caller saves local variables and temporaries, by copying (pushing) them onto the stack. | ||

# The caller pushes the callee's actual parameters onto the stack. | # The caller pushes the callee's actual parameters onto the stack. | ||

# The caller branches to the callee, pushing PC onto the stack (on most architectures, this is a single instruction called ''CALL''). When on the stack, the saved PC is called the ''return address''. | # The caller branches to the callee, pushing PC onto the stack (on most architectures, this is a single instruction called ''CALL''). When on the stack, the saved PC is called the ''return address''. | ||

| Line 38: | Line 44: | ||

Another notable variant of the stack frame is the use of [[canary value|canary values]] to thwart [[buffer overflow attack|buffer overflow attacks]]. | Another notable variant of the stack frame is the use of [[canary value|canary values]] to thwart [[buffer overflow attack|buffer overflow attacks]]. | ||

=Closures in programming languages= | =Closures in programming languages= | ||

| Line 56: | Line 59: | ||

Can produce undefined results, since it returns a pointer to a memory location that will be reused by other functions. After a few function calls, it is unlikely that the return value will point to the integer 4. It may seem like other high-level languages, such as [[Java programming language|Java]] or [[C sharp (programming language)|C#]], allow this, but they do not; their local variables are actually pointers to objects, and so one can return the object, but cannot return a pointer to the local variable. | Can produce undefined results, since it returns a pointer to a memory location that will be reused by other functions. After a few function calls, it is unlikely that the return value will point to the integer 4. It may seem like other high-level languages, such as [[Java programming language|Java]] or [[C sharp (programming language)|C#]], allow this, but they do not; their local variables are actually pointers to objects, and so one can return the object, but cannot return a pointer to the local variable. | ||

However, some languages, such as [[Ruby programming language|Ruby]] or [[Standard ML (programming language|Standard ML]] support closures. In Ruby, this example | However, some languages, such as [[Ruby programming language|Ruby]] or [[Standard ML (programming language|Standard ML]] support [[Closure_(computer_science)|closures]]. In Ruby, this example | ||

<code> | <code> | ||

| Line 78: | Line 81: | ||

=References= | =References= | ||

<references /> | <references /> | ||

Latest revision as of 11:37, 6 February 2024

In computer science, a stack frame is a memory management strategy used to create and destroy temporary (automatic) variables in procedural programming languages. Among other things, use of a stack allows programming languages to allow recursive calling of subroutines. Stack frames only exist at run-time.

When a program runs, it is called a process, and it starts with one thread (which may create additional threads). Stacks are per thread, and each thread's stack adds a new stack frame whenever a procedure (or function or subroutine or method) is invoked. Each stack frame contains space for actual parameters, local variables, temporary locations, and (in some architectures) information about the calling context such as the memory address of the calling subroutine[1]. When the called procedure finishes executing, its stack frame is removed from the stack, and thread execution resumes back in the calling procedure.

The exact format and contents when "packing the stack" (i.e., copying things onto it) during subroutine calls can vary by the programming language in use.

Overview

Many architectures provide special hardware and operating system features for stack frames. Although many different programming languages make use of stack frames, not all compilers pack the stack (i.e., organize the contents of each stack frame) in the same way, and this complicates calling between programs compiled with different compilers. For example, the success of the Pascal programming language was handicapped because it used the stack differently than C and C++, making it difficult for Pascal programs to call programs written in C or C++.

The order of packing information in the stack frame may or may not be part of the programming language specification. For example, on Solaris (Unix) systems, the GNU C compiler and the Sun C compiler pack the stack in slightly different ways; prior to 2007, it was necessary to compile two programs with the same compiler if they were to link (call across programs at runtime) with each other at runtime[2]. Other languages, such as Java (which runs in a special runtime environment independent of the underlying operating system), do specify exactly how the stack contents are organized, and so compiler incompatibities of the sort that C has do not arise in Java.

In a computer operating system, a special program, sometimes called a linking loader, launches programs by creating a process (a set of special data structures by which an operating system tracks the running of a program) which contains a very large virtual memory address space. Each process may contain one or more threads, and each thread will have its own stack and program counter. Each stack is typically assigned a fairly large region in the virtual address space of the enclosing process. If a stack grows to fill its currently allocated address space, the operating system may be able to allocate additional chunks of contiguous virtual memory space--if contiguous address space is still unused[3]. But if too many subroutine calls occur (due perhaps to an infinite loop or to recursive calling that continues too long), the stack may not be able to continue to grow and grab more address space. At this point, the operating system will terminate the process with an error.

How a stack frame works

To use a stack frame, a thread keeps two pointers, often called the Stack Pointer (SP), and the Frame Pointer (FP) (a.k.a. Base Pointer). The SP always points to the "top" of the stack, and the FP always points to the "top" of the frame for this call. Additionally, the thread also maintains a program counter (PC) which points to the next instruction to be executed. Then, whenever a function call takes place, the following steps take place in roughly this order:

- The caller saves local variables and temporaries, by copying (pushing) them onto the stack.

- The caller pushes the callee's actual parameters onto the stack.

- The caller branches to the callee, pushing PC onto the stack (on most architectures, this is a single instruction called CALL). When on the stack, the saved PC is called the return address.

- The callee pushes the value of FP onto the stack.

- The callee copies SP to FP.

- The callee adjusts SP, creating storage locations for local variables and local temporaries on the stack.

Steps 4--6 above are referred to as the function prologue, since they are the beginning of every function.

Within the body of the callee function, formal parameters and local variables can all be accessed at an address relative to the frame pointer. Because of this, a function may recurse, and automatically create a different storage location for each of its local variables.

Upon exit from the function, those steps are performed in reverse:

- The callee restores SP, and in doing so destroys the storage locations reserved for locals and temporaries.

- The callee restores FP, and in doing so returns to the previous frame.

- The callee branches back to caller by popping PC off of the stack (on most architectures, this is a single instruction called RETURN).

- The caller removes the actual parameters from the stack.

- The caller resotres local variables and temporaries, by popping them from the stack.

Steps 1--3 are referred to as the function epilogue, since they are at the end of every function.

Depending on the Application Binary Interface (ABI) of the particular architecture and platform, these steps may be performed in slightly different order. For instance, some systems will save temporaries in two groups---those saved by the caller, and those by the callee. Similarly, on some platforms, the program counter may be saved onto a different stack. Many other variants are possible.

Another notable variant of the stack frame is the use of canary values to thwart buffer overflow attacks.

Closures in programming languages

Stack frames assume that the lifetime of variables is delimited by the function which declares them, which is to say that after a function has returned, its local variables no longer exist. This is not the case for languages which support closures. For instance, in the C programming language,

int *badFunction(void)

{

int localVar = 4;

return &localVar;

}

Can produce undefined results, since it returns a pointer to a memory location that will be reused by other functions. After a few function calls, it is unlikely that the return value will point to the integer 4. It may seem like other high-level languages, such as Java or C#, allow this, but they do not; their local variables are actually pointers to objects, and so one can return the object, but cannot return a pointer to the local variable.

However, some languages, such as Ruby or Standard ML support closures. In Ruby, this example

def goodFcn()

i = 4

return Proc { return i }

end

Returns a closure that can access the local variable i, even after goodFcn() has terminated. As a result, languages such as these must employ more memory management infrastructure than a stack frame.

Security and "stack smashing" attacks

A stack smashing attack is a special case of a buffer overflow attack. Stack smashing attacks take advantage of the stack frame by assuming two things:

- Both program data (variables) and control flow information (saved program counter) are stored on the stack (as in the common x86 architecture), and

- The stack grows sequentially in contiguous memory (either from high to low addresses, or vice versa).

It is thus sometimes possible to craft a special input which will trick a poorly written program into voluntarily overwriting its control flow information, thus taking control of the executing program. When excess information is written to sequential addresses in a stack frame, it will eventually reach control flow information on the stack and overwrite it with a new return address, which can then jump to malicious code already written elsewhere in the stack. In this fashion, viruses can bootstrap themselves in a running process. This vulnerability is especially important if a machine executes programs downloaded over a network.

References

- ↑ Stack Layout and Calling Conventions (Gnu GCC version 3.2.3). Free Software Foundation, Inc. (2002). Retrieved on 2007-04-27.

- ↑ "gcc & glibc & ABI compatibility?" in Developer Forum, Sun Developer Network. Sun Microsystems (2006). Retrieved on 2007-04-27.

- ↑ Technical Q&A QA1419 (on allocated stack space in Mac OS X) in Apple Developer Network. Apple Computer (2005). Retrieved on 2007-04-27.

- ↑ "Computer Systems: A Programmer's Perspective" by Randal Bryant and David O'Hallaron © 2003 Prentice Hall. See p. 171.. Prentice Hall. Retrieved on 2007-05-03.